Vitalik Buterin just sold another 1,869 ETH, worth $3.67 million, over a single weekend. Before that, he offloaded 6,958 ETH worth $14.78 million. Each time he sold, ETH dropped further. It now sits at $1,875, down 34 percent for the year, the worst annual start in Ethereum’s history. And the co-founder keeps hitting the sell button.

Meanwhile, one of the original Pepe coin founders took a very different path. Instead of cashing out, he came back. Built three working products. Launched a presale. And watched it raise $7.258 million during the same fear cycle that has everyone else running for the exits. If you are asking what the best crypto to buy now is while every blue chip bleeds, the answer might be the project whose founder chose to stay.

Why Pepeto Feels Different From Everything Else on the Market Right Now

Let’s not pretend the meme coin space is short on options. New projects launch every week. Most of them disappear just as fast. What makes Pepeto different is not the branding or the hype. It is the fact that the products already exist.

PepetoSwap is a cross-chain meme trading platform that is live and testable today. Its bridge connects blockchains so tokens move between them without friction. Its zero-fee exchange removes the trading fees that most other platforms in the space charge on every trade. Three demos. All working. All built before the presale opened. That is not how meme coins usually operate, and the market is starting to notice.

SolidProof and Coinsult have both completed full security audits. Every transaction carries zero percent tax. A listing is confirmed for after the presale closes. And behind all of it is a founder with the cultural credibility of having created the original Pepe coin, a $7 billion movement, who came back to build the infrastructure that meme coins never had. As Reuters reported, the crypto industry is increasingly distinguishing between projects with real utility and those running purely on speculation. Pepeto sits firmly on the utility side.

The Best Crypto to Buy Now Is the One That Is Still Building While Everything Else Bleeds

Which coin counts as the best crypto to buy now changes from cycle to cycle. But the pattern behind it never changes. The winners are always the ones that were building during the fear. SHIB was virtually unknown in 2020 and went on to become a $40 billion asset. PEPE was unknown during the FTX recovery and hit $7 billion. Neither had products. Neither had infrastructure. They just had timing and community.

Pepeto has timing, community, and three working products serving a $45 billion meme economy that has never had dedicated tools before. As CoinMarketCap data shows, meme coins consistently rank among the highest-volume categories in all of crypto. Yet most meme traders still use general-purpose platforms built for DeFi protocols. That disconnect is the opportunity, and Pepeto was designed specifically to close it.

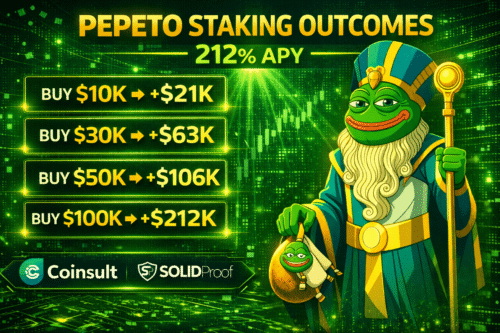

At $0.000000185, requires a $50 million market cap. That is less than one percent of what SHIB achieved with no products. Staking at 212 percent APY compounds daily while the broader market sorts itself out. But staking is not the reason new wallets keep entering the presale during peak fear. They are entering because they recognize what the best crypto to buy now looks like when founders build instead of sell.

$7.258 Million Raised. Three Products Live. Listing Confirmed. And the Presale Is Still Open.

This is the part that will not last. Every stage of the presale brings new buyers. Every new buyer brings more attention. And every day the broader market stays down, the contrast between the fear out there and the conviction in here becomes harder for serious investors to ignore. As CoinDesk has documented, presale accumulation during fear cycles has preceded every major breakout in meme coin history.

Pepeto is not hoping for a recovery. It is positioned for one. Products live. Audits done. Listing confirmed. The question is not whether the market recovers. It always does. The question is who will be in position when it does.

Visit the Pepeto website to secure a position before the presale closes.

Disclaimer:

This article is for informational purposes only and does not constitute financial advice. Cryptocurrency investments carry risk, including total loss of capital. Readers should conduct independent research and consult licensed advisors before making any financial decisions.

This publication is strictly informational and does not promote or solicit investment in any digital asset.