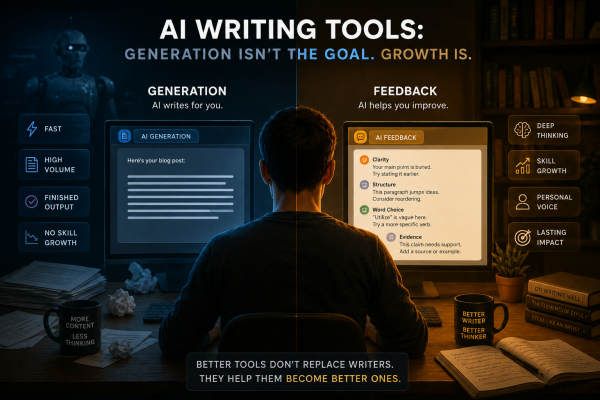

There is a quiet assumption at the heart of most AI writing tools today, and surprisingly few people are questioning it. The assumption is this: the goal of writing is to produce text, and therefore the goal of an AI writing tool should be to produce text faster, more easily, and in greater volume. On that premise, most of the industry has built itself around generation. Type a prompt, receive a paragraph. Describe a blog post, receive a blog post. Need an email, a report, or a cover letter? Here it is, already written.

This is not a small design decision. It reflects a fundamental belief about what writing is for and what writers actually need. And for a growing number of educators, writers, and thinkers who care about what happens to human skill over time, this belief deserves serious pushback.

The Confusion Between Output and Ability

When most people think about improving as a writer, they are not imagining a faster way to produce sentences. They are imagining a sharper ability to think clearly, to organize ideas, to notice when something is vague, to find the right word after rejecting the wrong five. Writing improvement is a process of developing internal capacities. It is slow, sometimes frustrating, and deeply personal.

AI generation tools offer something entirely different: output without process. You receive the finished product without going through the difficulty that makes you better. That product may be useful in the short term, but it does nothing to develop your own ability to produce it. In fact, by removing the difficulty, it may quietly erode whatever ability you already have.

This is the distinction that matters: output is not the same as ability. A student who submits a generated essay has a document. A student who struggled through five drafts, received structured feedback, revised their argument, and reconsidered their word choices has something different. They have grown. Tools that optimize for output are not building writers. They are replacing them.

Why Speed Became the Metric

It is worth asking how speed and generation became the dominant values in this space. The answer is partly economic and partly cultural. In content marketing, speed and volume have real commercial value. A team that can publish fifty articles a month is more visible than one that publishes five. In that context, a tool that generates drafts quickly solves a genuine problem.

The issue is that this logic traveled far beyond content marketing. It spread into education, into professional writing, into personal communication, and into academic research. And in most of those contexts, the goal is not volume. A student writing an argumentative essay does not need to produce more essays. A professional writing a strategic memo does not need to write it faster. A researcher drafting a paper does not need an AI to handle the thinking. In each of these cases, the value is in the process, not the output rate.

Most AI writing tools were not built with this distinction in mind. They were built for one kind of problem and then applied to every kind of problem. The result is a mismatch between what the tools offer and what writers, students, and professionals actually need in order to grow.

What Gets Lost When Process Disappears

Writing is one of the clearest windows into how someone thinks. When a person writes a difficult paragraph, they are not just arranging words. They are making decisions about what matters, what order ideas belong in, what the reader needs to understand, and what can be left unsaid. These decisions require judgment. Judgment requires practice. Practice requires doing the hard thing, not having the hard thing done for you.

When AI generation removes that process, it does not just save time. It removes the occasion for judgment to develop, and over many repetitions the loss compounds. A student who relies on generation through four years of schooling may arrive in professional life with weaker critical thinking skills than they would otherwise have had. A professional who delegates all written communication to an AI may gradually lose the ability to articulate their own thinking with precision. These risks are not far-fetched. They follow directly from what these tools are designed to do.

The more important question is not whether AI can write. It clearly can. The more important question is what we lose when we stop writing ourselves, and whether the convenience is worth that cost in every context where we are tempted to reach for it.

The Case for Feedback Over Generation

There is a different kind of AI writing tool that is worth building and worth using: one that engages with your writing rather than replacing it. Instead of generating a paragraph, it reads the paragraph you wrote and tells you where the logic breaks down, where the language becomes vague, and where the structure could be stronger. Instead of handing you a finished product, it pushes you to produce a better one yourself.

This is not a new idea in pedagogy. Teachers and writing coaches have known for a long time that feedback produces better writers than correction. When a teacher rewrites a student’s sentence for them, the student learns that their sentence was wrong. When a teacher asks the student why they chose that word, what they were trying to say, and whether there is a clearer way to say it, the student learns something about their own thinking. The result is a writer who improves rather than a document that looks better.

A tool like Thanis AI takes this approach seriously. Rather than generating or rewriting content on behalf of the user, it provides structured, specific feedback on what the writer has actually written. The goal is to help people improve their writing through reflection and revision, not to hand them something polished that bypasses their own thinking entirely. If you want to see what that difference looks like in practice, the comparison between Thanis and ChatGPT makes it concrete: two tools pointed at the same space but built around completely different assumptions about what writers actually need. In a landscape where nearly every other tool is rushing toward generation, this is a meaningfully different position.

The Responsibility That Comes With Scale

AI writing tools now reach millions of students and professionals. The choices these tools make about what to optimize for are not neutral. They shape habits, expectations, and, over time, human capacity itself. A generation of students trained to prompt rather than to write will carry those habits into every professional and intellectual context they enter.

This is not an argument against AI in writing. It is an argument for thinking carefully about which problems AI should solve and which problems it should help humans solve for themselves. The difference between those two things is enormous, and most of the industry has not been honest about it.

If you care about writing as a skill, about thinking as something worth preserving and developing, and about education as something more than credential production, then it is worth paying attention to which tools actually serve those goals. Generation at scale is impressive. But it is not the same as helping people become better at the thing that matters most: thinking clearly and expressing that thinking with precision, voice, and intention.

The tools optimizing for speed and volume are answering the wrong question. The right question is not “How do we produce more text?” It is “How do we help people write better, think more clearly, and develop the kind of judgment that no AI can hand them?” That is a harder problem. It is also the one worth solving.